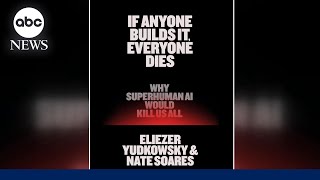

Web Reference: If Anyone Builds It, Everyone Dies: Why Superhuman AI Would Kill Us All (published in the UK with the alternative subtitle The Case Against Superintelligent AI) is a 2025 book by Eliezer Yudkowsky and Nate Soares that details potential threats posed to humanity by artificial superintelligence.

Sep 16, 2025 · In this urgent book, Yudkowsky and Soares walk through the theory and the evidence, present one possible extinction scenario, and explain what it would take for humanity to survive. The world is racing to build something truly new under the sun. And if anyone builds it, everyone dies.

Oct 14, 2025 · Their assumption is that a superintelligent AI will show no sign of evil until a single do-or-die conflict is instigated. Humans, though, often test defenses in detectable ways.

YouTube Excerpt: Eliezer Yudkowsky is an

Information Profile Overview

Superhuman AI Will Kill Us - Latest Information & Updates 2026

Details: $5M - $14M

Last Updated: April 2, 2026

Disclaimer: Information provided here is based on publicly available data, media reports, and online sources. Actual details may vary.