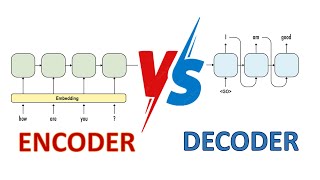

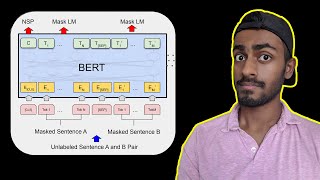

Web Reference: Bidirectional encoder representations from transformers (BERT) is a language model introduced in October 2018 by researchers at Google. [1][2] It learns to represent text as a sequence of vectors using self-supervised learning, and it uses the encoder-only transformer architecture.

Jul 23, 2025 · Encoder-only models excel through bidirectional attention and parallel processing, making them the go-to choice for understanding and analysis tasks. I’ll also argue they are relatively faster...

Dec 21, 2024 · In this in-depth blog post, we will walk you through every facet of ModernBERT, from architecture and training procedures to downstream evaluations, to potential use cases and limitations.
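The "bidirectional attention" of encoder-only models means that each token's self-attention can look at every other token in the sequence, both before and after it, whereas decoder-style (causal) attention masks out future positions. The sketch below is illustrative only and is not BERT's actual implementation: it assumes a single attention head, uses the raw token vectors as queries, keys, and values (no learned projections), and is written in pure Python. The names `self_attention` and `causal` are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    # Subtract the max for numerical stability; math.exp(-inf) is 0.0,
    # so masked positions receive zero weight.
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x: Matrix, causal: bool = False) -> Matrix:
    """Single-head scaled dot-product self-attention with Q = K = V = x.

    Hypothetical helper for illustration. causal=False mimics an
    encoder-only model (every token sees the whole sequence);
    causal=True mimics a decoder (token i sees only positions <= i).
    """
    n, d = len(x), len(x[0])
    out: Matrix = []
    for i in range(n):
        scores = []
        for j in range(n):
            if causal and j > i:
                scores.append(-math.inf)  # mask out future tokens
            else:
                dot = sum(a * b for a, b in zip(x[i], x[j]))
                scores.append(dot / math.sqrt(d))
        weights = softmax(scores)
        # Output for token i is the attention-weighted sum of value vectors.
        out.append([sum(weights[j] * x[j][k] for j in range(n)) for k in range(d)])
    return out


if __name__ == "__main__":
    tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    edited = [[1.0, 0.0], [0.0, 1.0], [5.0, -3.0]]  # only the last token differs

    # Bidirectional: the first token's representation depends on the last token.
    print(self_attention(tokens)[0], self_attention(edited)[0])
    # Causal: the first token cannot see later tokens, so its output is unchanged.
    print(self_attention(tokens, causal=True)[0], self_attention(edited, causal=True)[0])
```

Editing only the last token changes the first token's output in the bidirectional case but not in the causal case, which is the property that lets an encoder build each token's representation from its full left and right context.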

Encoder-Only Transformers Like BERT - Latest Information & Updates 2026

Last Updated: April 5, 2026

Disclaimer: Information provided here is based on publicly available data, media reports, and online sources. Actual details may vary.