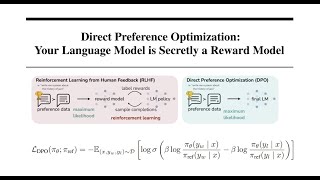

Web Reference:

Apr 13, 2024 · Commonly referred to as DPO, this method of preference tuning is an alternative to Reinforcement Learning from Human Feedback (RLHF) that avoids the actual reinforcement learning. In this blog post, I will explain DPO from first principles; readers do not need an understanding of RLHF.

Feb 27, 2026 · Learn how to use the direct preference optimization technique to fine-tune Azure OpenAI models.

Sep 7, 2025 · What is DPO (Direct Preference Optimization)? Direct Preference Optimization (DPO) is a novel and groundbreaking approach in the field of language model alignment, designed to...
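To make "avoids the actual reinforcement learning" concrete, here is a minimal sketch of the per-example DPO loss as it is commonly written: a logistic loss on how much more the tuned policy favors the preferred response over the rejected one, relative to a frozen reference model. The function name `dpo_loss`, its argument names, and the default `beta=0.1` are illustrative assumptions, not taken from any of the sources quoted above; the inputs are assumed to be summed token log-probabilities of each full response.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Per-example DPO loss (illustrative sketch, hypothetical names).

    Each argument is the log-probability of a whole response given the
    prompt, under either the policy being tuned or the frozen reference.
    """
    # How much the policy has shifted toward each response vs. the reference.
    chosen_shift = policy_chosen_logp - ref_chosen_logp
    rejected_shift = policy_rejected_logp - ref_rejected_logp

    # Scaled preference margin; beta controls how far the policy may drift.
    z = beta * (chosen_shift - rejected_shift)

    # -log(sigmoid(z)) == log(1 + exp(-z)), computed in a stable form.
    return max(-z, 0.0) + math.log1p(math.exp(-abs(z)))


# Policy identical to the reference: the margin is zero.
baseline = dpo_loss(-5.0, -7.0, -5.0, -7.0)

# Policy has moved toward the chosen response and away from the rejected one.
improved = dpo_loss(-4.0, -8.0, -5.0, -7.0)
```

When the policy matches the reference the loss equals log 2, and it falls as the policy assigns relatively more probability to the preferred response. No reward model or sampling loop appears anywhere; the loss is computed directly from log-probabilities on a fixed preference dataset.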

YouTube Excerpt: Direct Preference Optimization

Direct Preference Optimization (DPO) Explained

Last Updated: April 4, 2026

Disclaimer: Information provided here is based on publicly available data, media reports, and online sources. Actual details may vary.